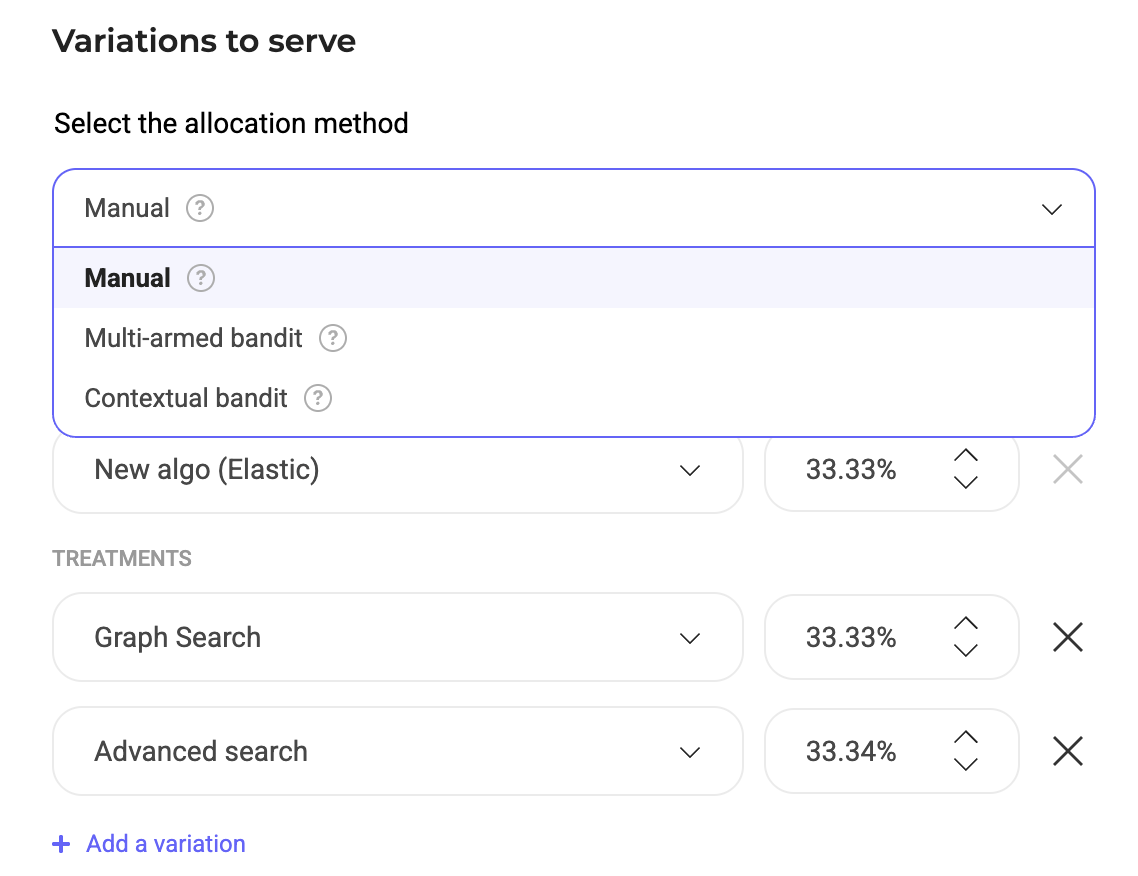

Kameleoon offers two types of dynamic traffic allocation algorithms to help maximize experiment performance: multi-armed bandits (MABs) and contextual bandits. Both approaches use real-time performance data to allocate more traffic to better-performing variations but differ in how they treat user data. This article explains how these algorithms work, when to use them, and how to activate them in your experiments. To enable dynamic traffic allocation, create a new experiment or open an existing one.

Kameleoon updates the allocation based solely on the lift of the primary goal.

Multi-armed bandits

When using dynamic allocation (such as MABs), you cannot manually edit exposure rates. Instead, Kameleoon automatically measures improvement over the original variation and estimates the gain in total conversions using the Epsilon Greedy algorithm. Kameleoon repeats this process hourly. The MAB algorithm redirects traffic to higher-performing variations, even without statistical significance, which can drastically reduce the time required to identify winning or losing variations.

Auto-optimized experiments rely on the original variation (“off” for Feature Experiments) to optimize the deviations. If the original variation does not receive traffic, the deviation might not update, causing the allocation to remain at 50/50 despite a clear winning variation.
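To make the reallocation step in this section more concrete, here is a minimal sketch of a generic epsilon-greedy allocation in Python. It is not Kameleoon's implementation: the function name `epsilon_greedy_allocation`, the `epsilon` value of 0.1, and the sample counts are all assumptions chosen for illustration. The idea is that a small share of traffic (`epsilon`) stays spread evenly across all variations for exploration, while the rest goes to the variation with the best observed conversion rate.

```python
def epsilon_greedy_allocation(conversions, visitors, epsilon=0.1):
    """Return a traffic share per variation for the next period.

    Hypothetical helper: `conversions` and `visitors` are dicts keyed by
    variation name; `epsilon` is the share of traffic kept for exploration.
    """
    names = list(visitors)
    # Observed conversion rate; a variation with no traffic is scored 0.0.
    rates = {
        name: (conversions[name] / visitors[name]) if visitors[name] else 0.0
        for name in names
    }
    # Current best performer (ties resolve to the first variation listed).
    best = max(names, key=lambda name: rates[name])
    # Exploration traffic is split evenly; the remainder goes to the best.
    explore_share = epsilon / len(names)
    return {
        name: explore_share + ((1 - epsilon) if name == best else 0.0)
        for name in names
    }


# Illustrative counts only, not real experiment data.
shares = epsilon_greedy_allocation(
    conversions={"original": 40, "variation_1": 55},
    visitors={"original": 1000, "variation_1": 1000},
    epsilon=0.1,
)
print(shares)
```

In this sketch, `variation_1` has the higher observed rate, so it receives most of the traffic while `original` keeps a small exploration share. Rerunning the function on fresh counts at a fixed interval would mirror the periodic update described above.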

Contextual bandits

Contextual bandits dynamically optimize traffic allocation in experiments using machine learning. They adapt in real time to redistribute traffic based on variation performance and user context to maximize effectiveness.

Key differences distinguish multi-armed bandits from contextual bandits:

- Multi-armed bandits: These optimize traffic distribution among multiple variations (arms) to maximize a defined goal, such as click rates or conversions. They treat all users equally, with no distinction based on user attributes. This makes them ideal for scenarios where user-specific data is unavailable or unnecessary, and the focus remains on finding the best-performing variation for the overall audience.

- Contextual bandits: These incorporate additional user-specific data (such as device type, location, or behavior) into decision-making. They facilitate more personalized decisions by tailoring variations to specific users for improved outcomes. The variability introduced by user attributes allows contextual bandits to optimize decisions in dynamic environments.
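The difference between the two approaches can be illustrated with a deliberately simplified sketch. The class below, `SegmentedEpsilonGreedy`, is hypothetical and is not how Kameleoon implements contextual bandits: it assumes a single discrete context value (here, a device type) and keeps separate statistics for each context, whereas a production contextual bandit would typically use a machine learning model over many user attributes. It only aims to show how the same variations can win for one group of users and lose for another.

```python
import random
from collections import defaultdict


class SegmentedEpsilonGreedy:
    """Toy contextual bandit: one epsilon-greedy learner per context value."""

    def __init__(self, variations, epsilon=0.1, rng=None):
        self.variations = list(variations)
        self.epsilon = epsilon
        self.rng = rng or random.Random()
        # stats[(context, variation)] = [visitors, conversions]
        self.stats = defaultdict(lambda: [0, 0])

    def _rate(self, context, variation):
        visitors, conversions = self.stats[(context, variation)]
        return conversions / visitors if visitors else 0.0

    def choose(self, context):
        # Explore with probability epsilon, otherwise pick the variation
        # with the best observed rate for this specific context.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.variations)
        return max(self.variations, key=lambda v: self._rate(context, v))

    def record(self, context, variation, converted):
        entry = self.stats[(context, variation)]
        entry[0] += 1
        entry[1] += int(converted)


# Illustrative data only; epsilon=0.0 disables exploration so the
# outcome below depends only on the recorded statistics.
bandit = SegmentedEpsilonGreedy(["original", "variation_1"], epsilon=0.0)
for _ in range(10):
    bandit.record("mobile", "variation_1", converted=True)
    bandit.record("mobile", "original", converted=False)
    bandit.record("desktop", "original", converted=True)
    bandit.record("desktop", "variation_1", converted=False)

print(bandit.choose("mobile"), bandit.choose("desktop"))
```

With these made-up observations, mobile visitors are sent to `variation_1` and desktop visitors to `original`. A multi-armed bandit pooling the same data would see identical overall rates for both variations and could not make that distinction.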