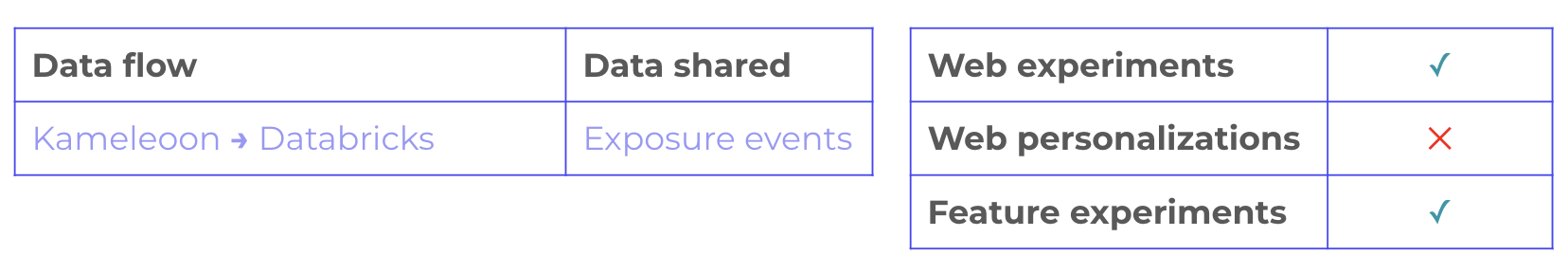

Once you have enabled the Databricks integration for your project, you can activate Use Databricks as a destination to automatically send events to your Databricks account whenever visitors are exposed to one of your Kameleoon experiments.

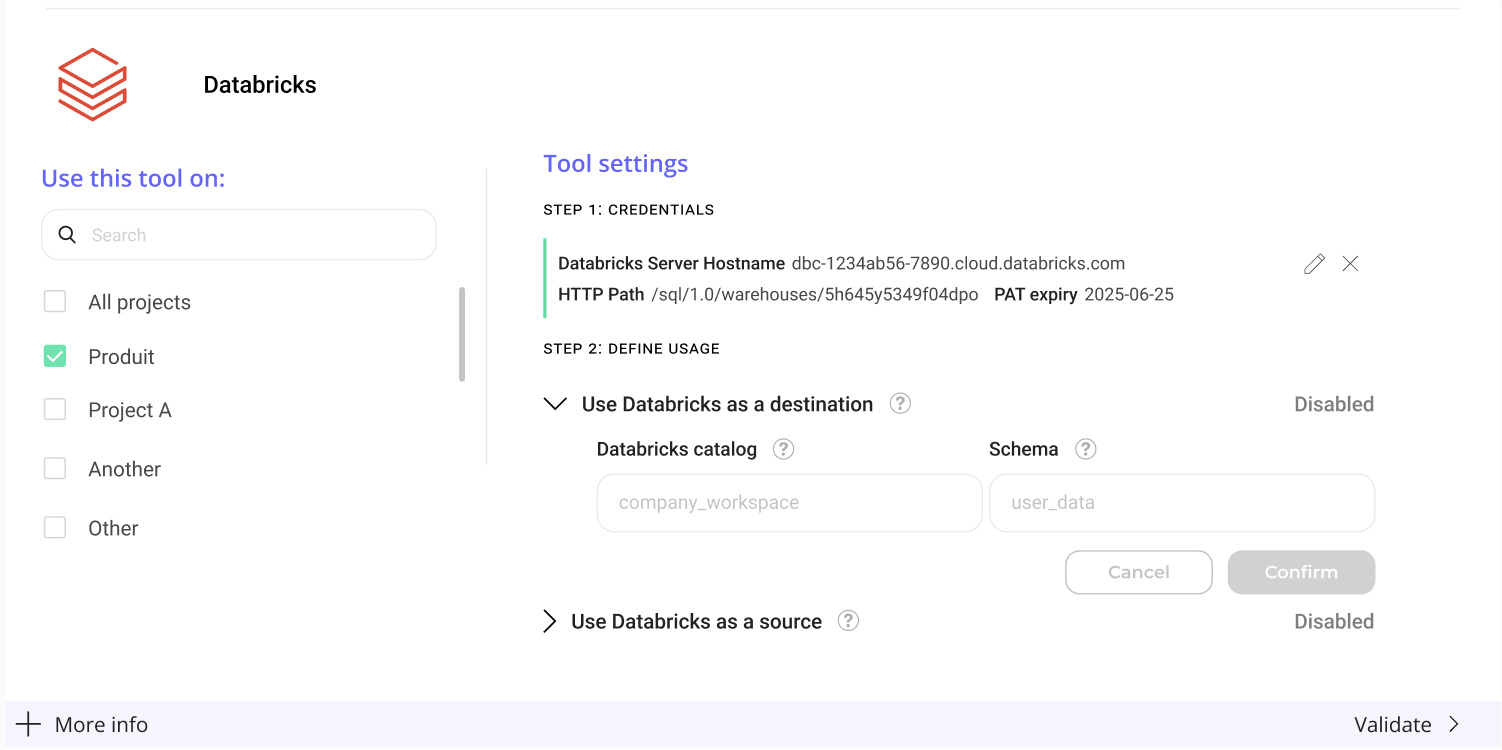

Activate Databricks as a destination

To activate Databricks as a destination, click Use Databricks as a destination, then fill in the following fields:

- Databricks catalog: the name of the Databricks catalog (the top-level container) that holds the Kameleoon schema to write to.

- Schema: the schema, within that catalog, that contains the tables Kameleoon writes to.
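In Databricks, a table is addressed with a three-level `catalog.schema.table` name, so the two settings above combine with the table name to locate the exported data. The minimal Python sketch below builds that name, assuming the default schema `kameleoon_events` and the table `kameleoon_experiment_event` described on this page. The helper names and the catalog `my_catalog` are hypothetical placeholders, not part of Kameleoon's or Databricks's API.

```python
def quote_identifier(name: str) -> str:
    """Quote a Databricks SQL identifier with backticks, doubling any embedded backtick."""
    if not name:
        raise ValueError("identifier must not be empty")
    return "`" + name.replace("`", "``") + "`"


def destination_table_name(catalog: str, schema: str = "kameleoon_events") -> str:
    """Return the quoted catalog.schema.table name of the Kameleoon event table.

    Hypothetical helper: `catalog` and `schema` mirror the two settings
    entered when activating the destination.
    """
    table = "kameleoon_experiment_event"
    return ".".join(quote_identifier(part) for part in (catalog, schema, table))


if __name__ == "__main__":
    # "my_catalog" is a placeholder for your own catalog name.
    print(destination_table_name("my_catalog"))
    # → `my_catalog`.`kameleoon_events`.`kameleoon_experiment_event`
```

Backtick quoting keeps the name valid even if your catalog name contains characters such as hyphens.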

What “Databricks as a destination” does

Enabling Use Databricks as a destination streams all Kameleoon experiment exposure events into Databricks. Events are stored in the kameleoon_events schema of your Databricks catalog, which is the catalog you configured during setup and in which the Kameleoon user has been granted write access. The data is saved in a table named kameleoon_experiment_event.

The SQL schema of this table is the following:

custom_visitor_id is read from the Cross Device Reconciliation custom data, if it has been set.
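As an illustration, the Python sketch below builds a SQL query that counts exposure events per custom_visitor_id, skipping rows where no Cross Device Reconciliation ID was set. It assumes that an unset custom_visitor_id is stored as NULL and that each row represents one exposure event. The function name and the catalog `my_catalog` are hypothetical placeholders.

```python
def quote_identifier(name: str) -> str:
    """Quote a Databricks SQL identifier with backticks, doubling any embedded backtick."""
    return "`" + name.replace("`", "``") + "`"


def exposures_per_custom_visitor_query(catalog: str) -> str:
    """Build a query counting exposure events per custom_visitor_id (hypothetical helper)."""
    table = ".".join(
        quote_identifier(part)
        for part in (catalog, "kameleoon_events", "kameleoon_experiment_event")
    )
    # Assumption: rows without a Cross Device Reconciliation ID hold NULL here.
    return (
        "SELECT custom_visitor_id, COUNT(*) AS exposure_events\n"
        f"FROM {table}\n"
        "WHERE custom_visitor_id IS NOT NULL\n"
        "GROUP BY custom_visitor_id"
    )


if __name__ == "__main__":
    print(exposures_per_custom_visitor_query("my_catalog"))
```

The resulting string can be pasted into the Databricks SQL editor or, from a Databricks notebook, passed to `spark.sql(...)`.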

With campaign data stored in Databricks, you gain the ability to perform in-depth analysis and reporting. You can leverage Databricks’s querying capabilities to extract valuable insights from the collected data, helping you make data-driven decisions to optimize your campaigns and user experience.

Centralizing Kameleoon campaign results in Databricks also enriches your Databricks data, making it a more comprehensive repository of user data that you can use for a variety of analytics and business intelligence purposes.